What is Automated Essay Scoring (AES)?

Automated Essay Scoring is a computerized assessment system that uses Artificial Intelligence (AI) and Natural Language Processing (NLP) to evaluate and score essays. It analyzes factors like grammar, vocabulary, structure, and overall coherence, comparing each response against a pre-defined rubric to assign a score as a human would.

While AES may seem like a recent innovation, its roots go back over 50 years—enough time for it to move from relying on simple pattern recognition to today’s more sophisticated systems.

Ellis Page developed the first system, Project Essay Grade (PEG), in 1966. Progress stalled for decades, but the 1990s changed everything—better computing power unlocked smarter, more capable AES tools.

Today, modern grading apps can score multiple essays at once, deliver accurate scores, and give teachers more time to focus on personalized support.

How Does It Work? The Key Technologies Behind AES

AES tools use Natural Language Processing (NLP), machine learning, and rubric-based scoring to automate the scoring process for accuracy and consistency.

Here’s how these three technologies work to provide better scoring on essays:

Natural Language Processing (NLP)

NLP analyzes essays by examining vocabulary, grammar, and sentence structure. It goes beyond simple error detection by aiming to understand the content and context of the writing.

NLP can also identify writing patterns, detect potential plagiarism, and assess the logical flow of arguments. Some AES tools even integrate sentiment analysis to check that a student’s tone matches the subject matter and your expectations.
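To make the idea of examining vocabulary and sentence structure concrete, here is a minimal sketch of surface-feature extraction. It is an illustration only, not how any particular AES product works: the function name `extract_features` and the chosen features are assumptions, and real systems rely on far richer linguistic analysis.

```python
import re

def extract_features(essay: str) -> dict:
    """Compute a few simple surface features of an essay (illustrative only)."""
    # Split into sentences after ., !, or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", essay.strip()) if s]
    # Lowercased word tokens made of letters and apostrophes.
    words = re.findall(r"[a-z']+", essay.lower())
    word_count = len(words)
    return {
        "word_count": word_count,
        "sentence_count": len(sentences),
        # Average number of words per sentence, a rough proxy for sentence structure.
        "avg_sentence_length": word_count / len(sentences) if sentences else 0.0,
        # Share of distinct words, a rough proxy for vocabulary variety.
        "type_token_ratio": len(set(words)) / word_count if word_count else 0.0,
    }

sample = "The cat sat. The cat ran away!"
features = extract_features(sample)
print(features)
```

Features like these are only a starting point; they say nothing about meaning, which is why the tools described here layer more advanced models on top.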

Machine Learning

In machine learning, AES systems train on large datasets of human-scored essays. Algorithms such as linear regression, neural networks, and deep learning models help the system predict scores based on essay features like word choice, organization, sentence variety, structure, and clarity.

Modern AES models use deep learning, including transformer-based models such as GPT, to better understand text. They learn from millions of essays and become more accurate over time.

Some of the common machine learning models include:

- Linear regression (basic): A statistical model that finds relationships between essay features and human-assigned scores to capture general grading trends.

- Neural networks (advanced, more accurate): A machine learning model loosely inspired by the human brain that analyzes deep patterns in writing for better accuracy.

- Support Vector Machines (SVM): A classification model that groups essays based on similarities to make it easier to distinguish different quality levels.

- Cubist models: A rule-based system that uses decision trees to handle complex grading rules with a logical approach.
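As a rough illustration of the simplest model in this list, the sketch below fits a one-feature linear regression that predicts a score from word count. The training numbers are invented toy data, and the helper name `fit_line` is hypothetical; production systems train on many features and far larger sets of human-scored essays.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of feature and score, and variance of the feature.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented toy data: essay word counts and the scores human graders gave.
word_counts = [100, 200, 300, 400]
human_scores = [2, 3, 4, 5]

slope, intercept = fit_line(word_counts, human_scores)

# Predict a score for a new, unseen essay of 250 words.
predicted = slope * 250 + intercept
print(round(predicted, 2))
```

The same pattern, learning from human-scored examples and then predicting a score for a new essay, carries over to the more advanced models, which simply learn more complex relationships.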

Some AES tools even use reinforcement learning, where human graders can adjust the model’s scoring to match their own standards.

Rubric-based Scoring

AES systems follow pre-defined grading rubrics, such as those for grammar, coherence, and argument structure, to guide feedback. These rubrics ensure the AI evaluates essays consistently and produces objective, reliable scores.

Some AES tools allow you to integrate your own rubrics to fit your class needs. You have flexibility over how much weight you want to give to aspects such as thesis clarity or argument strength.
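One way to picture rubric weighting is as a weighted average of per-criterion scores. The sketch below assumes hypothetical criterion names, a 1 to 5 scale, and made-up weights; it is not the formula used by any specific tool.

```python
def weighted_score(criterion_scores: dict, weights: dict) -> float:
    """Combine per-criterion scores into one score using rubric weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Each criterion contributes in proportion to its weight.
    weighted_sum = sum(criterion_scores[name] * w for name, w in weights.items())
    return weighted_sum / total_weight

# Hypothetical rubric: thesis clarity and argument strength count twice as much as grammar.
weights = {"thesis_clarity": 2, "argument_strength": 2, "grammar": 1}
# Hypothetical scores for one essay on a 1-5 scale.
scores = {"thesis_clarity": 4, "argument_strength": 3, "grammar": 5}

final = weighted_score(scores, weights)
print(final)
```

Raising the weight on a criterion such as thesis clarity makes it count for more of the final score, which is the kind of flexibility custom rubrics are meant to give you.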

Plus, these tools can offer real-time feedback on essays, so your students can improve their writing and deliver better papers.

How Accurate is AES? Strengths and Limitations

While AES has impressive scoring capabilities, it also has limitations, especially when it comes to evaluating elements like creativity and original thought. Let’s break down its strengths and weaknesses.

Strengths

AES excels at efficiency, consistency, and scalability—three qualities that make it a powerful essay scoring assistant.

Speed and Efficiency

AES tools allow you to score your entire class’s essays within minutes instead of hours. That’s time you save to focus on providing better student support, which benefits everyone in the classroom.

All you need to do is upload your essays and the system will handle the rest:

- The AES compares each essay to thousands of human-scored examples it has already learned from.

- The system scans for grammar, structure, vocabulary, and logical flow using AI and natural language processing (NLP).

- Lastly, the AES instantly assigns a score and, in many cases, gives basic feedback that students can act on immediately.

That’s time saved to focus on what really matters: one-on-one support, lesson planning, or just catching your breath.

Still, some teachers wonder whether this speed and efficiency is ethical. Kwame Anthony Appiah, Professor of Philosophy and Law at New York University, addresses this concern in his New York Times Ethicist column:

“Time saved in evaluating the papers might be better spent on other things — and by ‘better,’ I mean better for the students,” says Appiah. “It’s not hypocritical to use A.I. yourself in a way that serves your students well.”

Consistency and Objectivity

Even the most dedicated teacher will sometimes fall prey to unconscious bias, especially when grading dozens of essays under time pressure. Fatigue, handwriting, and prior knowledge of a student's identity and skill level can all unintentionally influence scoring.

That’s where AES helps. It applies the same rubric to every essay, every time—no distractions or assumptions. Instead of replacing your judgment, it supports fairer scoring by offering a consistent starting point.

You score students based on the quality of their writing and not on subjective factors.

Scalability

When you're faced with hundreds — or even thousands — of essays, manual scoring becomes a logistical challenge. It's slow, expensive, and nearly impossible to keep completely consistent at scale.

AES shines here. For large assessments like standardized tests or district-wide evaluations, it delivers fast, consistent, and objective scoring — without burning out your team.

It’s not about replacing educators, but about handling volume so teachers can focus on impact.

Limitations

Despite its advantages, AES comes with notable drawbacks you can’t ignore. It struggles to assess creativity, depth of analysis, and each student’s unique voice, all of which matter in good writing.

Surface-level Assessment

While AES tools are good at evaluating standard aspects of essays, such as grammar and structure, they may struggle with deeper aspects, such as creativity.

This is why you should use AES primarily as an initial step in the essay evaluation process. You can then do a second pass to review aspects like thesis statements, depth of analysis, and argument strength, all of which require human judgment and nuance.

Lack of Human Touch

While AES is objective, it does not fully grasp the nuances of essay writing, such as tone, voice, or unique perspectives.

One way to compensate is to provide actionable feedback in areas the AES may overlook. For example, you can offer personal insights on recurring mistakes your student makes or highlight unique strengths in their writing that the system might miss.

What Are the Best AES Tools? A List of Top 4 Options

There are many tools available that promise to improve scoring efficiency, making it hard to decide which one to choose. Here’s a list of top tools on the market to automate your essay scoring:

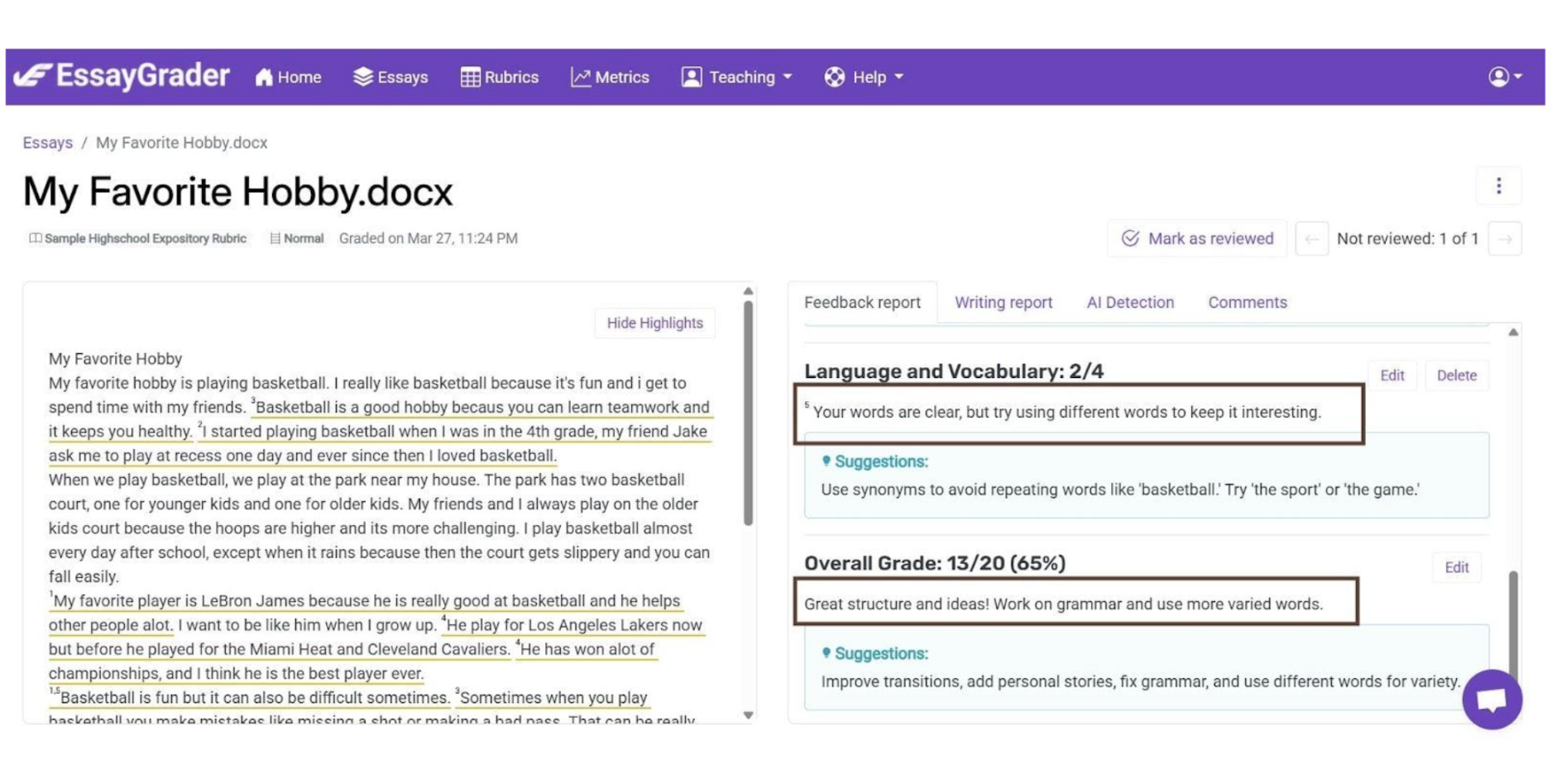

1. EssayGrader

EssayGrader is the most accurate AI scoring platform, trusted by 30,000+ educators worldwide. It cuts scoring time by 80% and provides consistent and transparent feedback.

Unlike other AES tools, we built EssayGrader to meet the needs of teachers, not just large institutions.

Key Features:

- Bulk upload: EssayGrader can process your entire class’s essays in 2 minutes or less. It gives you time to focus on more personal support for students and maintain a better work-life balance outside the classroom.

- Advanced AI for human-like scoring: EssayGrader uses modern transformer-based AI models to evaluate logical flow, voice, clarity, and tone. It makes scoring align with how educators assess writing quality.

- Real-time, scalable scoring: EssayGrader instantly processes bulk assignments, making it perfect for any classroom size. Whether you're reviewing 5 or 500 essays, you get fast, consistent results.

- Custom rubrics: You can create custom rubrics to ensure consistent, unbiased grading. You also gain access to 16 preset rubrics that align with your curriculum.

As a bonus, EssayGrader comes with plagiarism detection to determine if your student’s essay is 100% original. It can also detect if your student used AI to generate their essays.

Unlike other AES tools that focus solely on scoring, EssayGrader.ai provides your students with AI-powered, structured feedback on grammar, content, and structure. These suggestions help them improve their writing quickly.

EssayGrader offers direct access with a free trial, transparent scoring criteria, and flexible pricing. You don’t have to worry about complex licensing agreements either.

2. SmartMarq


SmartMarq is a scalable scoring system for large institutions. It blends AI-driven scoring with human expertise for a more balanced assessment of your essays.

Key Features:

- AI-driven scoring: SmartMarq combines human expertise with AI-driven scoring. You have to upload the student’s graded essay, and SmartMarq’s AI will provide you with additional marks to complement your feedback.

- Custom rubrics: SmartMarq allows you to create custom rubrics that fit your needs. You can define them with different point values and performance descriptors.

- Marking rules: You can use multiple graders within SmartMarq to ensure fairness and resolve disagreements. SmartMarq also allows you to control visibility so graders can only view their own students or keep all submissions anonymous.

The issue with SmartMarq is that it doesn’t include plagiarism detection technology that confirms whether your student’s essay is truly the result of their writing.

Also, SmartMarq’s scoring process is slower than EssayGrader since it requires human input first. And if you don’t have the time to create your rubrics, it doesn’t come with pre-set rubrics you can use.

3. ETS e-Rater Scoring Engine

The ETS e-Rater Scoring Engine combines AI and human input to deliver personalized feedback. Educators and testing organizations frequently use it for standardized exams like the GRE and TOEFL.

Key Features:

- Automated essay feedback: e-Rater Scoring Engine provides a complete score of your student’s essay based on various writing features like grammar, style, and organization.

- Natural Language Processing (NLP) and AI technology: The engine uses AI and NLP to analyze key aspects of writing such as vocabulary, grammar, and lexical complexity. This technology ensures that feedback is accurate and relevant to the writing prompt.

- Off-topic detection: e-Rater Scoring Engine flags essays that are off-topic or inconsistent with the prompt. It also evaluates the structure of arguments and the creative use of language.

Despite its strengths, e-Rater lacks direct integration with common LMS platforms like Google Classroom and Canvas. Teachers must rely on third-party tools like Gradescope for access.

A second disadvantage is that ETS designed e-Rater primarily for standardized testing rather than everyday classroom use. Their system is also proprietary and not directly accessible to teachers or students.

4. IntelliMetric

IntelliMetric is known for adaptive feedback and the ability to detect irrelevant or off-topic submissions. It helps maintain scoring accuracy while reducing time spent reviewing essays individually.

Key Features:

- AI-Powered Scoring: IntelliMetric delivers highly accurate and consistent scoring, often surpassing human experts. This makes it a reliable tool for large-scale assessments in education.

- Instant Feedback and Scoring: The tool scores responses instantly and returns quick feedback, saving you valuable time so you can focus on other important teaching tasks.

- Plagiarism Detection: IntelliMetric’s plagiarism detection verifies that your student’s content is 100% original. As a result, you get peace of mind about the paper’s authenticity.

A major limitation of IntelliMetric is that you can’t create custom rubrics within the software. Therefore, it might not be flexible enough depending on your scoring needs.

Should You Use It? When AES is Useful and When You Still Need Human Feedback

We want to make it clear: the role of AES is to assist and not replace teachers in their essay evaluations. While it automates scoring based on a predefined rubric or numerical value, human feedback is still essential for guiding students' growth.

Here’s when AES helps automate scoring to make you more efficient and when a human needs to intervene to refine its feedback:

When AES is Useful

AES can be a valuable tool when used in specific contexts, such as automating the typical manual work of the scoring process:

Initial Scoring

AES can serve as a first-pass scoring system before teachers provide their human feedback on specific areas. That way, teachers can spend less time on routine scoring and more time helping students refine their arguments.

“The best way to look at AI for grading is as a teaching assistant or research assistant who might do a first pass … and it does a pretty good job at that,” says Diane Gayeski, professor of strategic communications at Ithaca College, in a CNN Business article.

Large Classes

AES is useful for teachers who need to score essays for large classes quickly and efficiently. Instead of spending hours manually evaluating each paper, AES can provide initial scores, identify potential errors in structure or grammar, and assign scores based on predefined criteria.

Routine and Objective Essays

AES can provide reliable scores on essays that focus on grammar, spelling, and factual accuracy.

For assignments that primarily assess a student’s knowledge rather than their ability to think creatively, AES can be a faster and more effective scoring tool. For example, standardized tests that require well-structured responses based on specific prompts benefit from AES's efficiency.

When You Still Need Human Feedback

Despite its advantages, AES still falls short in some areas, where your human feedback is irreplaceable:

Creative or Subjective Writing

AES tools might be good at evaluating grammar, structure, and sentence coherence, but they can’t fully grasp creative thinking or emotional depth. Essays that require originality and creativity still need the oversight of a human teacher for nuance.

Creative writing often involves humor, metaphor, and cultural context. These are elements that only a human can interpret and assess accurately.

Personalized Support

To help your class improve their writing, you must still give each student personalized support. AI can assist with scoring, but real progress comes from thoughtful feedback and guidance.

For example, Diane Gayeski runs part of her students’ essays through ChatGPT to ask the AI tool to provide initial scoring and suggestions for improving their writing. After getting the feedback, she adds her comments for her students. “[For example], I’ll share what I think about their intro, too, and we’ll talk about it,” continues Gayeski.

An Additional Consideration for Educators: Data Privacy

Instructors should be mindful of the data privacy policies of AES tools. For example, does the system keep student essays forever? Does the tool use the essay submissions for training without permission?

These factors are non-negotiable if you want to protect your students’ confidentiality. You should look for AES platforms that are transparent about the way they handle and protect your students’ work.

At EssayGrader, we prioritize data privacy. Our clear policies on data use, storage, and protection provide both students and teachers with peace of mind.

We're FERPA compliant and follow industry-standard security protocols to keep student data safe, including encryption. Additionally, we never share or sell student data, so you can be sure that their academic work remains private and secure.

It’s Time to Embrace the Future of Essay Scoring

AES saves time and improves consistency, especially in large-scale assessments. However, teachers should pair it with human oversight to guarantee fairness and provide personalized feedback.

Start by trying out an AES tool like EssayGrader. You save time on scoring while still giving personalized feedback, which strikes the right balance between efficiency and quality. With our 30-day free trial, you can experience firsthand how much time you can save on scoring.

Try EssayGrader for free and take the first step towards the future of essay scoring!
